Data Mining in marketing is the practical use of data to identify real SEO growth opportunities, rather than producing reports for their own sake. This approach brings together search, analytics, content, CRM, and user-behavior data to pinpoint where a site is losing visibility and where it can win it back. As a result, it becomes easier to decide which pages deserve expansion, which topics should be added, and which technical issues are holding back organic traffic. The key difference is that the analysis is meant to lead to concrete decisions: what to improve, what to remove, what to create, and what to prioritize. This is especially important for larger sites, where the number of URLs, topics, and queries quickly exceeds what can be assessed manually. In the next section, you will see what Data Mining in marketing is in detail and why first-party data matters so much today.

What is Data Mining in marketing and how does it affect organic traffic?

Data Mining in marketing is the analysis of combined datasets that helps uncover patterns affecting visibility and the quality of organic traffic. In practice, it is not just about checking rankings or visit counts, but about answering specific questions: which content attracts high-quality traffic, where users are not getting the answer they need, and which pages can grow after small adjustments.

The starting point is usually first-party data: Google Search Console, web analytics, a list of subpages, conversion data, CRM, internal search, and sometimes server logs. Once these sources are connected, you can see not only which queries trigger impressions, but also which organic visits lead to engagement, progression to an offer, or a sale. That changes SEO decision-making, because the priority stops being traffic alone and becomes useful traffic.

The impact on organic traffic comes from better alignment between the site and search intent. Data analysis highlights topical gaps, cannibalization, weak CTRs, thin content, incorrect internal linking, or pages that rank but do not answer the user’s question well enough. When these issues are fixed, the site is more likely to grow at the level of entire topic clusters, not just individual keywords.

In practice, Data Mining helps decide what to do next with specific URLs. Sometimes the best move is to expand the content, sometimes to consolidate several similar pages, and sometimes to remove low-value subpages. This approach works best where a site has a lot of content, a broad offering, or a fragmented category structure and it is difficult to evaluate everything manually.

It is also worth remembering that Data Mining connects SEO with content, UX, and sales data. That makes it possible to assess whether a page not only earns clicks, but also moves the user forward. This matters because higher organic traffic without visit quality often does not translate into a real improvement in business results.

Current context and the importance of first-party data in SEO

First-party data in SEO has become essential because it offers the most faithful view of how users arrive on a site and what they do after landing. This refers to data collected in your own systems: Search Console, analytics, CRM, internal search, or a content database. In day-to-day work, it is typically more actionable than external estimates, since it reflects a specific site, its URLs, and its conversions.

Today’s SEO landscape forces a broader perspective than a simple list of keywords. Search results shift quickly, and a ranking for a single query does not, on its own, indicate whether the user found the right answer or reached the right entry page. Topic clusters, landing page types, and the full journey from an organic entry to a user action are taking on increasing importance.

In practice, this means strong SEO decisions depend on well-ordered data. If goals in analytics are mislabeled, URLs are inconsistent, and content types lack a shared taxonomy, the analysis can become misleading very quickly. That is why, before looking for growth opportunities, it is worth verifying measurement quality, data history, conversion mapping, and page naming conventions.

The value of first-party data is also rising due to privacy requirements and limits on combining datasets from different sources. Not every data point can be paired legally or sensibly, so analysis needs to rest on clearly defined objectives and correctly collected data. A simple but trustworthy model built on your own data is better than an elaborate analysis based on incomplete or inconsistent signals.

For SEO, the key point is that first-party data makes it possible to evaluate not only visibility, but also how well content matches intent. It helps identify pages with strong demand potential that still do a poor job of turning visits into deeper engagement or a conversion. These are usually the areas where the fastest and most cost-effective optimization opportunities are found.

How does the Data Mining process work in practice?

In practice, the Data Mining process works by combining data from several systems, cleaning and organizing it, and turning it into a set of concrete SEO actions. The goal is not merely to produce a report, but to pinpoint which pages are losing visibility, which topics have unmet demand, and where users drop off before the next step. Without standardized data, even technically correct reports can lead to poor decisions. That is why the first stage is to bring order to URLs, page types, categories, and conversion goals.

Most often, data is combined from Google Search Console, web analytics, a CRM or sales system, the list of subpages, and basic technical metrics. This makes it possible to see not only clicks and positions, but also visit quality: engagement, paths into the offer, contribution to conversions, and differences between user segments. It changes how SEO is approached, because what matters is not just the visit from Google, but what happens after the user arrives.
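As a rough illustration of that join, the sketch below merges Search Console rows with analytics rows on a shared URL key. The field names (`clicks`, `impressions`, `sessions`, `conversions`), the assumption that sessions are already filtered to organic traffic, and the sample figures are all made up for the example and do not come from any real export.

```python
# Hypothetical exports, already keyed by the same URL format.
gsc_rows = [
    {"url": "/guides/seo-audit", "clicks": 120, "impressions": 4000},
    {"url": "/services/seo", "clicks": 45, "impressions": 900},
]
analytics_rows = [
    {"url": "/guides/seo-audit", "sessions": 110, "conversions": 1},
    {"url": "/services/seo", "sessions": 40, "conversions": 6},
]


def join_by_url(gsc, analytics):
    """Combine visibility and behavior metrics into one record per URL."""
    behavior = {row["url"]: row for row in analytics}
    joined = []
    for row in gsc:
        match = behavior.get(row["url"], {})
        sessions = match.get("sessions", 0)
        conversions = match.get("conversions", 0)
        joined.append({
            **row,
            "sessions": sessions,
            "conversions": conversions,
            # Conversion rate of organic sessions; 0.0 when there is no traffic.
            "conversion_rate": conversions / sessions if sessions else 0.0,
        })
    return joined


pages = join_by_url(gsc_rows, analytics_rows)
for page in pages:
    print(page["url"], page["clicks"], f'{page["conversion_rate"]:.1%}')
```

In this invented sample, the guide earns more clicks, but the service page converts a far larger share of its visits, which is the kind of difference that only shows up once the sources are combined.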

The next step is to validate data quality and pinpoint areas where measurement is incomplete or misleading. The analysis covers URL duplicates, incorrect goal mapping, inconsistent content naming, attribution issues, and anomalies driven by seasonality. If it is unclear which address version is the correct one, or which event represents a real conversion, SEO prioritization becomes arbitrary.

Once the sources are cleaned up, the analysis shifts from individual queries to themes, landing pages, and user behavior. The focus is on patterns rather than isolated signals: pages with high impressions and low CTR, content that attracts traffic but does not drive users further, categories with demand and weak visibility, and clusters the site does not cover yet. The biggest growth opportunities usually sit not in a single phrase, but in a whole group of similar queries and related URLs.
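One of these patterns, high impressions combined with low CTR, is simple to screen for. The following is a minimal sketch, assuming page-level rows with `impressions` and `clicks`. The thresholds (1,000 impressions, 2% CTR) are arbitrary placeholders and should be tuned per site and per page type.

```python
def low_ctr_candidates(pages, min_impressions=1000, max_ctr=0.02):
    """Return pages with enough impressions whose CTR is below the cutoff."""
    flagged = []
    for page in pages:
        if page["impressions"] < min_impressions:
            continue  # too little data to judge CTR reliably
        ctr = page["clicks"] / page["impressions"]
        if ctr < max_ctr:
            flagged.append({**page, "ctr": round(ctr, 4)})
    # Largest demand first, so the biggest missed opportunities lead the list.
    return sorted(flagged, key=lambda p: p["impressions"], reverse=True)


# Invented sample data for illustration only.
sample_pages = [
    {"url": "/category/shoes", "impressions": 12000, "clicks": 96},
    {"url": "/blog/size-guide", "impressions": 5000, "clicks": 400},
    {"url": "/blog/old-news", "impressions": 300, "clicks": 1},
]
candidates = low_ctr_candidates(sample_pages)
print([c["url"] for c in candidates])
```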

In the final stage, the findings go into an implementation backlog, not a slide deck that changes nothing. That backlog typically includes content updates, page consolidation, internal linking improvements, title and meta description adjustments, technical changes, and new content clusters. Each action should have a priority, an owner, and a way to measure impact after rollout.

Stages of data analysis and identifying SEO opportunities

The stages of SEO data analysis move from gathering sources to identifying which pages and topics have the highest growth potential. First, data is collected and shared identifiers are standardized so that Search Console, analytics, the CRM, and the URL list all refer to the same subpages and content types. Without this, it is not possible to reliably compare clicks, on-site behavior, and business outcomes for a specific address.
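A shared identifier often starts with a normalized URL. The sketch below shows one possible normalization using Python's standard `urllib.parse`. The list of tracking parameters, and the decisions to force `https`, strip `www.`, and drop trailing slashes, are assumptions that need to match how the site actually serves its canonical URLs.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters to drop; adjust to the site's own setup.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid"}


def normalize_url(url):
    """Return a single join key for URL variants of the same page."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    # Paths can be case-sensitive, so only the trailing slash is removed.
    path = parts.path.rstrip("/") or "/"
    query = urlencode(
        [(k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS]
    )
    # Force https and drop the fragment so all variants collapse to one key.
    return urlunsplit(("https", host, path, query, ""))


print(normalize_url("http://WWW.Example.com/Blog/?utm_source=x#top"))
print(normalize_url("https://example.com/blog?page=2&utm_medium=email"))
```

Applying the same function to every source before joining means Search Console, analytics, and CRM rows for one page end up under one key.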

The second stage is an audit of measurement quality and the site’s technical health. This includes checking data gaps, response status codes, indexation, duplication, canonicals, pagination, speed, and the mobile version. This is often where it becomes clear that the issue is not the content itself, but signals fragmented across multiple URL variants or important pages being inaccessible to crawlers.

The third stage focuses on queries and user intent. Phrases are grouped by topic, need type, and journey stage, and then mapped to entry pages. This makes it easier to spot content gaps, cannibalization, pages that target the wrong intent, and areas where the data shows demand but there is no well-matched subpage. Mapping queries to intent and specific landing pages delivers far more insight than looking at rankings alone.
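Cannibalization is one of the issues this mapping can surface. As a simplified sketch, the function below flags queries where more than one URL takes a meaningful share of clicks. The row format (`query`, `url`, `clicks`) and the 20% share cutoff are assumptions for illustration. Real cases still need a manual check, because two URLs serving one query can be legitimate.

```python
from collections import defaultdict


def find_cannibalization(rows, min_share=0.2):
    """Map each query to the URLs that each take at least min_share of its clicks."""
    clicks_by_query = defaultdict(lambda: defaultdict(int))
    for row in rows:
        clicks_by_query[row["query"]][row["url"]] += row["clicks"]

    conflicts = {}
    for query, per_url in clicks_by_query.items():
        total = sum(per_url.values())
        if total == 0:
            continue
        competing = sorted(u for u, c in per_url.items() if c / total >= min_share)
        if len(competing) > 1:
            conflicts[query] = competing
    return conflicts


# Invented query-level rows for illustration only.
query_rows = [
    {"query": "crm pricing", "url": "/pricing", "clicks": 60},
    {"query": "crm pricing", "url": "/blog/crm-cost", "clicks": 40},
    {"query": "what is crm", "url": "/blog/what-is-crm", "clicks": 90},
    {"query": "what is crm", "url": "/pricing", "clicks": 5},
]
conflicts = find_cannibalization(query_rows)
print(conflicts)
```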

The fourth stage is an analysis of the entry pages themselves and what users do after arriving from organic results. You review CTR, positions, visit depth, movement between informational content and the offer, use of internal search, drop-offs, and assisted conversions. If an article brings in traffic but does not move users forward, the SEO opportunity may not be another new piece, but stronger internal linking, a clearer information structure, or a more explicit path to the category.

The fifth stage assesses content quality against real user questions and the breadth of the topic. You compare answer completeness, heading structure, information freshness, structured data, and whether the content addresses questions visible in queries and in internal search. Content often loses not because it is too short, but because it skips key questions or nudges the user in the wrong direction.

The final stage is segmenting by potential and selecting actions. Pages, topics, and clusters are divided by business impact, implementation effort, current visibility, and data quality, and then the work is sequenced. Strong SEO opportunity identification ends with a short, implementation-ready priority list, not a long list of observations.
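One way to turn that segmentation into an ordered list is a simple score. The sketch below is a generic impact-per-effort heuristic, not a method prescribed here. The 1 to 10 scales, the `data_quality` discount, and the sample backlog items are assumptions, and current visibility is left out to keep the example short.

```python
def prioritize(actions):
    """Sort backlog items by a simple impact-per-effort score, highest first."""
    def score(action):
        # data_quality in [0, 1] discounts items backed by unreliable measurement.
        return action["impact"] * action["data_quality"] / action["effort"]

    return sorted(actions, key=score, reverse=True)


# Hypothetical backlog; impact and effort are rated on a 1-10 scale.
backlog = [
    {"action": "Consolidate duplicate category pages", "impact": 8, "effort": 5, "data_quality": 0.9},
    {"action": "Rewrite titles on low-CTR guides", "impact": 5, "effort": 1, "data_quality": 0.8},
    {"action": "Build new comparison cluster", "impact": 9, "effort": 8, "data_quality": 0.5},
]
ranked = prioritize(backlog)
for item in ranked:
    print(item["action"])
```

The exact formula matters less than applying the same criteria consistently, so that the order of work can be explained and revisited.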

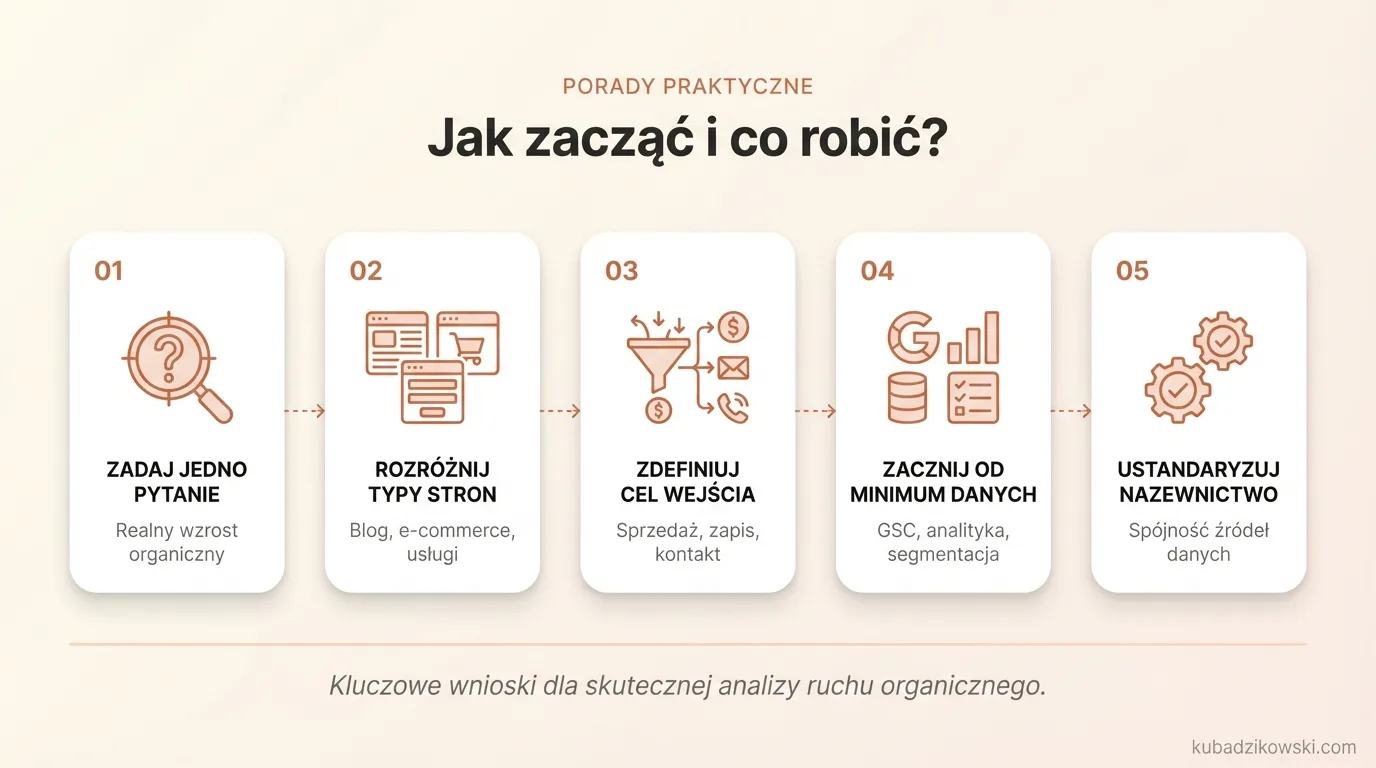

Practical tips: how to start and what to do

It is best to begin with one business question: what organic traffic growth can realistically help the company, and on which page types. A blog is evaluated differently than e-commerce categories, and differently again from service pages that generate leads. If you do not define up front which entry points should drive a sale, a sign-up, or a contact, the analysis quickly turns into a collection of trivia.

At the start, you do not need an elaborate data ecosystem, but you do need the minimum required to make decisions. That typically means Google Search Console, analytics with properly configured goals, a list of URLs segmented by content type, basic lead or sales data, and insights from internal search. If these sources are inconsistent, first standardize the naming of pages, categories, and conversions.

The next step is connecting queries to user intent and a specific landing page. Do not assess keywords in isolation from the landing page, because then you cannot tell whether the issue is missing content, a weak page structure, or poor alignment between the answer and the query. The most value comes from analysis at the topic-cluster and entry-page level, not from a single phrase.

It is worth setting priorities where three signals overlap: there is search demand, the current content does not properly match the intent, and users rarely move on to the offer or the next subpage. This approach makes it easier to separate pages with real potential from areas that generate high traffic but deliver low-quality visits. In practice, improving a handful of key landing pages often produces a bigger impact than publishing a large volume of new articles.
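The three-signal overlap can be expressed as a plain filter. In the sketch below, `intent_match` is a hypothetical 0 to 1 rating (for example from a manual content review), `onward_rate` is the share of organic entries that continue to another page, and all thresholds and sample values are placeholders rather than recommended values.

```python
from dataclasses import dataclass


@dataclass
class LandingPage:
    url: str
    monthly_impressions: int  # proxy for search demand
    intent_match: float       # 0..1, hypothetical review-based rating
    onward_rate: float        # share of organic entries that continue on-site


def overlapping_signals(pages, min_impressions=2000, max_intent_match=0.6,
                        max_onward_rate=0.15):
    """Return URLs where demand is present, intent match is weak, and few users move on."""
    return [
        p.url
        for p in pages
        if p.monthly_impressions >= min_impressions
        and p.intent_match < max_intent_match
        and p.onward_rate < max_onward_rate
    ]


# Invented sample pages for illustration only.
landing_pages = [
    LandingPage("/category/laptops", 9000, 0.4, 0.10),
    LandingPage("/blog/laptop-care", 7000, 0.9, 0.05),
    LandingPage("/category/cables", 500, 0.3, 0.08),
]
priority_urls = overlapping_signals(landing_pages)
print(priority_urls)
```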

The outcome of the work should be implementation-ready assets, not a report for its own sake. In most cases, this means:

- a map of topical clusters and intent,

- a list of pages to update or consolidate,

- proposals for new content and missing topics,

- recommendations for internal linking and changes to the category structure,

- a technical backlog and a simple dashboard for monitoring results.

At the end, the delivery conditions need to be agreed. You will need access to data, clearly defined conversion goals, and an implementation owner on the content and development sides. Without clear accountability, even a strong analysis will not translate into increased visibility.

After implementation, do not treat the work as finished. Review the impact separately by topic, URL, and user segment, because demand, seasonality, and search results layouts change continuously. Data Mining in SEO works best as a cycle: analysis, implementation, measurement, adjustment.

Most common mistakes in using Data Mining and how to avoid them

The most common Data Mining mistakes come from working with incomplete datasets, misreading intent, and failing to turn findings into concrete implementations. In that situation, a company collects plenty of information, but cannot decide what to fix first or how to assess the outcome. The issue is not the absence of data, but the lack of data that is organized well enough to support decisions.

The first frequent mistake is judging success only by traffic growth. Traffic alone does not indicate whether the user found the right content, progressed further, and completed a meaningful action. That is why visibility and clicks should be paired with engagement, transitions to the offer, leads, or assisted sales.

The second mistake is ignoring search intent. A page may rank, but if it answers a different question than the one the user is asking, CTR and traffic quality will remain weak. Instead of copying a competitor’s topic, check what your site genuinely lacks: answers, information structure, examples, comparisons, or a clear path to the next step.

The third mistake is implementing changes without a before-and-after measurement. If you do not capture a baseline for clicks, CTR, positions, conversions, and on-site behavior, it becomes difficult to separate the effect of changes from seasonality or an algorithm update. In practice, a simple reference point for the most important URLs and topical clusters is enough.

Organizational issues also tend to recur:

- inconsistent tagging and different names for the same page types across multiple systems,

- incorrect conversion mapping or no distinction between micro- and macro-conversions,

- no clear owner for content, SEO, and development actions,

- a one-off analysis with no follow-up validation of the results.

The simplest way to avoid these mistakes is to narrow the scope and work in stages. Start by picking one segment, such as product categories or how-to articles, tidy up the data, and verify which changes actually move the needle. It is better to thoroughly analyze and implement one high-impact area than to cover the whole site broadly without the ability to act.

Why is data analysis an ongoing process, and how do you monitor it?

Data analysis is ongoing because organic traffic shifts along with demand, content changes, user behavior, and the search results themselves. A page that is growing today may lose visibility in a few weeks due to an intent shift, new competition, or a technical issue introduced during a rollout. A one-time audit shows a snapshot, but it does not give you control over what happens next. The real value is not the analysis alone, but the cycle: identifying an issue, implementing a change, measuring the impact, and refining again.

In practice, monitoring should work on three levels: topics, specific URLs, and business segments. A rise in clicks alone is not enough if the growth comes from informational content while transitions to the offer, leads, or assisted sales do not improve. That is why it helps to look beyond visibility and assess traffic quality and what users do next on the site.

It is best to build a simple dashboard that highlights week-over-week and month-over-month changes. It does not need to be complex, but it should connect data from Search Console, analytics, and business goals. If a report does not lead to a decision, it is only an archive of numbers. At a minimum, it is worth tracking:

- clicks, impressions, CTR, and average position for topic clusters and key landing pages,

- the number of URLs generating traffic and the share of new pages in total organic traffic,

- post-entry engagement: navigation to subsequent pages, use of filters, internal search, drop-offs,

- micro- and macro-conversions from organic traffic, also at the content-segment level,

- technical signals: indexation, response errors, duplication, speed drops, mobile issues.
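The week-over-week view behind such a dashboard reduces to a relative change per cluster. This is a minimal sketch with invented weekly click totals. The cluster names are hypothetical, and a cluster with no prior traffic returns `None` rather than a misleading percentage.

```python
def pct_change(current, previous):
    """Relative change between two periods; None when there is no baseline."""
    if previous == 0:
        return None
    return (current - previous) / previous


# Hypothetical weekly organic clicks per topic cluster: [last week, this week].
weekly_clicks = {
    "guides": [1200, 1260],
    "categories": [800, 680],
    "new-cluster": [0, 45],
}
week_over_week = {
    cluster: pct_change(values[-1], values[-2])
    for cluster, values in weekly_clicks.items()
}
for cluster, change in week_over_week.items():
    label = "n/a" if change is None else f"{change:+.1%}"
    print(cluster, label)
```

The same function works for month-over-month comparisons if the input holds monthly totals instead.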

It also matters to separate leading indicators from final outcomes. Early signals include improved CTR, more queries ranking in top positions, stronger entry performance on refreshed pages, or longer user paths. The final outcome is the impact on leads, sales, offer inquiries, or other goals; it usually takes longer to appear, so changes should not be judged too early.

A solid monitoring process means tagging deployments and comparing metrics from before and after the change. If you update a category, consolidate several URLs, or expand a content cluster, record the date, the scope of work, and the expected outcome. Without that documentation, it is easy to mistake the true impact of a release for seasonality, a paid campaign, or a broader market trend.
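A deployment record and a basic before-and-after comparison could look like the sketch below. The record fields, the date, the window length, and the click figures are all hypothetical. The comparison is deliberately naive: it does not correct for seasonality or campaigns, which is why the documented context matters.

```python
from datetime import date, timedelta

# Hypothetical deployment log entry: date, scope, and expected outcome.
release = {
    "deployed_on": date(2024, 3, 11),
    "scope": "Consolidated three overlapping category URLs",
    "expected": "Higher clicks on the remaining category page",
}


def before_after(daily_clicks, deployed_on, window_days=28):
    """Difference in average daily clicks between equal windows around a release."""
    window = timedelta(days=window_days)
    before = [c for d, c in daily_clicks.items() if deployed_on - window <= d < deployed_on]
    after = [c for d, c in daily_clicks.items() if deployed_on < d <= deployed_on + window]
    if not before or not after:
        return None  # not enough data on one side of the release
    return sum(after) / len(after) - sum(before) / len(before)


# Invented daily clicks: 100 per day before the release, 120 per day after.
daily_clicks = {}
for offset in range(1, 8):
    daily_clicks[release["deployed_on"] - timedelta(days=offset)] = 100
    daily_clicks[release["deployed_on"] + timedelta(days=offset)] = 120

delta = before_after(daily_clicks, release["deployed_on"], window_days=7)
print(release["scope"], delta)
```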

Not every change needs an immediate response, but each one should have an owner and a clear alert threshold. Typical signals include a drop in clicks on key pages, more sessions without conversions, a sudden decline in CTR, or the loss of visibility across an entire cluster. A steady operating cadence tends to work best: regular data reviews, a short priority list, and quick fixes where the business impact is highest.
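Alert thresholds can be kept as a small rule table checked against a baseline. The sketch below assumes relative-drop thresholds of 20% for clicks and 15% for CTR. Those numbers, like the metric names and sample values, are illustrative, and each rule would still need a named owner.

```python
# Assumed alert rules: a metric triggers when its relative change is at or below the value.
ALERT_RULES = {"clicks": -0.20, "ctr": -0.15}


def triggered_alerts(current, baseline, rules=ALERT_RULES):
    """Return (metric, relative_change) pairs that breach their threshold."""
    alerts = []
    for metric, threshold in rules.items():
        if baseline[metric] == 0:
            continue  # no baseline, so a relative change is undefined
        change = (current[metric] - baseline[metric]) / baseline[metric]
        if change <= threshold:
            alerts.append((metric, round(change, 3)))
    return alerts


# Invented figures for one key landing page.
baseline_metrics = {"clicks": 1000, "ctr": 0.04}
current_metrics = {"clicks": 750, "ctr": 0.038}
alerts = triggered_alerts(current_metrics, baseline_metrics)
print(alerts)
```

Here the 25% drop in clicks breaches its threshold, while the 5% dip in CTR stays within tolerance and does not demand a response.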